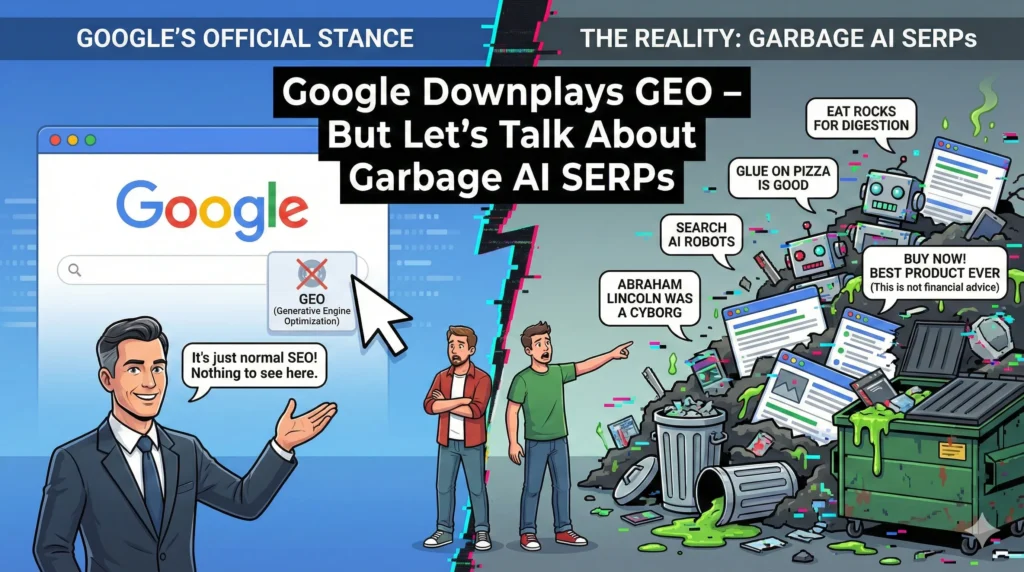

In the ever-shifting landscape of search engine optimization, practitioners often find themselves grappling with conflicting signals from Google. On one hand, the search giant offers highly specific, granular advice—tips about structural elements like content chunking, heading hierarchy, or minor usability enhancements. On the other hand, a far more significant, existential crisis looms over the quality of the search results themselves: the overwhelming influx of low-quality, algorithmically generated content, often dubbed “garbage AI SERPs.”

The underlying tension here is clear: are we focusing on trimming the hedges when the foundation of the garden is rotting? While advice on optimal content structure is always welcome, many in the SEO community argue that discussing minor optimization tactics is a diversion from the critical problem of search engine results pages (SERPs) being clogged by content generated cheaply, quickly, and often without genuine experience or verifiable accuracy.

This raises the essential question: if Google is downplaying complex, quality-focused signals like “GEO” (read here as a marker of specific expertise or authority) while simultaneously battling a tidal wave of synthetic text, what does this mean for the future of authoritative publishing and the fundamental user experience of search?

The Distraction of Granular SEO Advice

Google frequently provides detailed guidance aimed at helping publishers improve crawlability and basic user experience. A recent example is the emphasis placed on structuring content effectively, often referred to as “chunking.” This practice involves breaking down large blocks of text into digestible segments using headings, lists, and short paragraphs.
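As a rough illustration of how a publisher might audit chunking, the sketch below flags paragraphs that run past a word limit. The function name `flag_long_paragraphs` and the 120-word threshold are assumptions made for this example, not figures from Google, and the code assumes paragraphs are separated by blank lines.

```python
def flag_long_paragraphs(text: str, max_words: int = 120) -> list[tuple[int, int]]:
    """Return (paragraph_index, word_count) for each paragraph above max_words.

    max_words is an arbitrary, hypothetical threshold chosen for illustration.
    """
    # Treat blank lines as paragraph boundaries and ignore empty fragments.
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        word_count = len(paragraph.split())
        if word_count > max_words:
            flagged.append((index, word_count))
    return flagged


sample = "Short intro paragraph.\n\n" + " ".join(["word"] * 150) + "\n\nClosing line."
print(flag_long_paragraphs(sample))  # [(1, 150)]
```

A flagged paragraph is only a prompt for an editor to consider a split, a list, or a subheading. It says nothing about whether the content itself is accurate or original.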

The Role of Content Chunking in Modern SEO

Content chunking is, fundamentally, good writing practice. It improves readability, which is widely believed to help rankings indirectly, since satisfied users tend to stay on a page longer and bounce less often. Furthermore, well-structured content is easier for Google’s systems to parse, which may make it more likely that key information will be selected for rich snippets or featured placement.

However, when Google highlights such elementary aspects of publishing, it can feel like a strategic redirection. For experienced SEO professionals, focusing on the optimal paragraph length is a low-level optimization. The industry’s primary concern should be the integrity of the information presented. If the underlying content is synthesized, factually weak, or merely recycled boilerplate dressed up by an AI, no amount of perfect chunking will elevate its true value.

The core frustration among publishers is that while they meticulously adhere to Google’s guidelines on structure, their deeply researched, human-written articles are often outranked by mass-produced, high-volume AI content that lacks real experience but happens to be formatted adequately.

Decoding the Downplay of “GEO”

The term “GEO” is most widely expanded as **Generative Engine Optimization**, the practice of optimizing content for AI-driven answer engines. In this context, however, it is read more loosely as a signal of specific, demonstrable expertise: **Geographic Expertise Optimization** or perhaps a broader **Genuine Expertise Optimization**. Read that way, the signal is closely related to the Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) framework that Google has heavily prioritized.

When reports emerge that Google is “downplaying” or minimizing the immediate importance of such a deep quality signal, it raises red flags. Why would Google deemphasize the very qualities it claims to value most—genuine, verifiable authority—at the exact moment when the internet is being flooded with content that lacks these traits?

The Challenge of Measuring Genuine Expertise at Scale

One possible explanation for downplaying a concept like GEO is the technical difficulty of measuring it consistently and scalably across billions of documents.

1. **Synthetic Expertise:** Generative AI models excel at mimicking authoritative language. An LLM can produce text that reads exactly like it was written by a local expert or a seasoned professional, even if the model itself has never set foot in the geographical area or performed the task being described.

2. **Algorithm Confusion:** If Google struggles to differentiate between highly polished, synthetically generated niche expertise and genuinely human-vetted content, temporarily reducing the weight of a GEO-style signal might be a way to avoid accidentally penalizing legitimate publishers while it refines its detection methods.

3. **Broadening the Signal:** Google may be attempting to generalize its ranking signals, relying more on site-wide authority and established trust signals (the A and T of E-E-A-T) rather than hyper-specific expertise indicators that are easily gamed or imitated by large language models (LLMs).

Regardless of the specific technical reason, the perception remains: Google appears to be prioritizing operational simplicity or universal applicability over the rigorous defense of genuine, localized, or niche authority, leaving the door wide open for high-volume, low-integrity publishers.

The Crisis of Garbage AI SERPs

The proliferation of generative AI tools has fundamentally altered the economics of content creation. Where content used to be a costly asset requiring time, research, and human input, it can now be generated instantly for near-zero marginal cost. This shift has resulted in a massive surge of articles, product descriptions, reviews, and informational pages being pumped into the search index.

The consequence is a noticeable degradation in overall SERP quality, manifesting in several critical ways:

Symptom 1: Information Redundancy and Homogeneity

When AI models train on the same data sets and are prompted with similar queries, the output tends to converge. This leads to what can be called “information redundancy.” Users searching for an answer increasingly find ten different articles saying the exact same thing, often using similar phrasing and structure. This homogenization severely diminishes the value of the search result and frustrates users looking for unique insights or alternative perspectives.
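One simple way to put a number on this convergence is to compare articles by the overlap of their word sequences. The sketch below uses Jaccard similarity over three-word shingles, a standard near-duplicate technique. It is an illustration only and not a description of how Google measures redundancy; the function names and the shingle size are assumptions.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word sequences (shingles) found in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    # Texts shorter than k words yield an empty set.
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of two texts: shared shingles / all distinct shingles."""
    set_a, set_b = shingles(a, k), shingles(b, k)
    if not set_a and not set_b:
        return 0.0  # Nothing to compare; treated as no overlap by convention.
    return len(set_a & set_b) / len(set_a | set_b)


first = "content chunking improves readability for users"
second = "content chunking improves readability for readers"
print(jaccard(first, second))  # 0.6
```

Scores near 1.0 across many top-ranking pages would suggest the kind of homogeneity described above, though a high score alone cannot tell who copied whom or whether the shared text is accurate.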

Symptom 2: The Hallucination Effect

Generative AI models are designed to predict the next plausible word in a sequence, not to verify facts against the real world. This process leads to “hallucinations”—confidently presented factual errors or invented data points. When publishers automate content generation without robust human fact-checking, these errors propagate rapidly across the SERP, polluting the information ecosystem. Trust in Google’s ability to serve accurate, reliable information erodes when key positions are held by content riddled with verifiable falsehoods.

Symptom 3: The Erosion of Experience (The First ‘E’)

Google’s introduction of Experience (E) alongside Expertise, Authoritativeness, and Trustworthiness was a direct response to content written without hands-on knowledge. AI-generated content struggles inherently with demonstrated experience. It can describe a process perfectly, but it cannot convey the subtle, nuanced difficulties, troubleshooting tips, or personal failures that only come from having actually performed the task.

For users seeking genuine reviews or practical how-to guides, encountering experience-less AI prose is a massive downgrade in user utility. The search results become superficially correct but functionally useless.

The Battle Against Scale: Why Google’s Filters Are Failing

The critical challenge for Google is not necessarily identifying AI content; it’s identifying *low-quality* AI content at the incredible scale it is being produced.

Publishers are becoming increasingly sophisticated. They don’t just paste raw AI output; they use tools to “humanize” the text, employ hybrid editing models (AI generation followed by minimal human polish), and spread the content across vast networks of programmatic websites. This makes the job of Google’s spam and quality algorithms far harder.

The Need for Contextual Quality Scoring

Traditional spam detection focuses on obvious tactics (keyword stuffing, cloaking). The new challenge requires contextual quality scoring. Google must look beyond basic grammar and readability and ask deeper questions:

* Does this content provide new information not already found in the top 50 results?

* Does the author demonstrate tangible credentials, primary research, or proprietary data?

* Is the content backed by positive external signals (e.g., discussions on social platforms, citations from high-authority sources)?

When Google focuses on advice like chunking, it risks reinforcing the idea that superficial adherence to structural rules is enough to succeed, even if the underlying content is manufactured dreck designed purely for ranking arbitrage.

A Sustainable Content Strategy in the Age of AI Garbage

For serious publishers and content marketers, the influx of poor-quality AI SERPs is not just a threat; it is an immense opportunity to differentiate themselves. When the baseline quality of search results drops, genuine, high-effort content becomes even more valuable to the user and, consequently, to Google’s long-term ranking signals.

The path forward requires doubling down on the very qualities that AI struggles most to replicate: genuine E-E-A-T and unique value.

1. Prioritize Demonstrable Experience (The First ‘E’)

If your content describes a process, prove that you or the author have actually done it. This means incorporating high-quality, original photos, unique data visualizations, video evidence, case studies, and proprietary findings. Experience cannot be synthesized; it must be documented. For service-based sites, this translates to clear author biographies showcasing real credentials, not just general descriptive titles.

2. Focus on Primary Research and Unique Data

The most reliable way to create content that AI cannot replicate is to use data that the LLMs haven’t been trained on. Conduct original surveys, analyze proprietary sales data, perform unique experiments, or aggregate data sets in novel ways. Content that introduces net-new information into the public domain will always stand out from content that simply rephrases existing web sources.

3. Cultivate Authoritative Voice and Trust Signals

In an environment of low trust, publishers must actively build confidence. Ensure clear citation protocols, robust fact-checking processes, and transparency regarding your sources. Trustworthiness (T) is arguably the most resilient factor against automated content, as it relies on real-world reputation, editorial oversight, and sustained quality over time.

4. Embrace Niche and Local Expertise

While Google may be downplaying a broad GEO signal, hyper-specific, verifiable niche expertise remains crucial. If you are writing about local regulations in Austin, Texas, or niche farming techniques, prove your local or hands-on connection. This specificity creates a barrier to entry for generalized LLMs and strengthens your E-E-A-T signals within that small, high-value ecosystem.

The Call for Systemic Clarity

The ongoing debate surrounding Google’s minor advice versus the major problem of AI SERP quality highlights a critical need for greater transparency from the search giant. Publishers need to understand definitively how core quality signals like E-E-A-T are weighed against the sheer volume and structural adherence of AI-generated content.

The SEO community is willing to adapt to structural requirements like content chunking, but it requires assurance that these efforts are not being undermined by a system that rewards quantity over quality, speed over substance, and synthetic prose over genuine human experience. Until Google’s algorithms can consistently and accurately filter the “garbage AI SERPs,” the focus of authoritative publishers must remain fixed on creating indispensable content that, by its very nature, stands beyond the reach of automated imitation. The true competition in 2024 and beyond is not merely against other human publishers, but against the relentless, low-cost tide of machine-generated mediocrity.